The “Distillation” Method Shaking Technological Sovereignty

The U.S. State Department has named Chinese AI companies like DeepSeek, raising concerns about the “large-scale distillation” of American AI models. This is not just an international political issue. For business leaders leveraging AI within their own companies, it’s a moment to fundamentally rethink “how” technology is procured and the associated “security risks.”

“Model distillation” is a technique in which a large number of queries are sent to an existing high-performance AI model and its outputs are used as training data for a smaller model. It helps democratize R&D by making it possible to imitate cutting-edge models from OpenAI or Google at low cost, but it also risks infringing the original developers’ intellectual property rights and violating their terms of service.
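As a rough illustration of the mechanism described above, here is a toy sketch in Python. It is not how any real lab or vendor works: the `teacher` function is a hypothetical stand-in for a large model behind an API, and the "student" is just a two-parameter line fitted only to the teacher's answers. All names and numbers are assumptions for illustration.

```python
import random


def teacher(x: float) -> float:
    """Hypothetical stand-in for a large model that we can only query."""
    return 3.0 * x + 2.0


def collect_outputs(n_queries: int, seed: int = 0) -> list:
    """Send many queries to the teacher and record (input, output) pairs."""
    rng = random.Random(seed)
    queries = [rng.uniform(-10.0, 10.0) for _ in range(n_queries)]
    return [(x, teacher(x)) for x in queries]


def fit_student(pairs: list) -> tuple:
    """Fit a small student model y = a*x + b to the teacher's outputs (least squares)."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in pairs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    a = cov_xy / var_x
    b = mean_y - a * mean_x
    return a, b


# The student never sees the teacher's internals, only its answers.
pairs = collect_outputs(1000)
a, b = fit_student(pairs)


def student(x: float) -> float:
    """The distilled model: cheap to run, trained purely on teacher outputs."""
    return a * x + b


print(f"teacher(5.0) = {teacher(5.0):.3f}, student(5.0) = {student(5.0):.3f}")
```

The point of the sketch is that the student reproduces the teacher's behavior from query results alone, which is why large-scale, unauthorized querying is the part that raises concerns.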

What business leaders need to grasp is that this issue goes beyond the ethical and regulatory question of “technology theft” and directly affects their own AI adoption strategy. Companies that jump on a cheap AI model now face a real risk of unknowingly depending on technology that later becomes subject to regulation.

The Business Risks of Distillation

Model distillation itself is a common technique in AI research. The problem arises when it is done on a “large scale” and “without permission.” It is unclear exactly how much distillation DeepSeek performed, but the U.S. State Department’s warning points to the following risks for businesses.

Supply Chain Risks Becoming Apparent

Companies must thoroughly investigate which models their AI tools and APIs are based on. If your systems rely on a model that becomes subject to sanctions, you risk sudden service interruptions or legal action.

For example, suppose you adopt a Chinese-made AI translation tool. If it was built by distilling a U.S. model without permission, it could become a target of U.S. sanctions, rendering the tool unusable. This is not just a “technology selection” issue; it’s a management challenge directly tied to your Business Continuity Plan (BCP).

Increased Data Leakage Risks

Distilled models may “remember” some of the data the original model was trained on. In addition, a vendor that is opaque about how its model was built is often just as opaque about how it handles your inputs, so confidential information you enter into such a tool could end up in another company’s model. This risk is especially critical when using AI for highly sensitive tasks like contract review or customer data analysis.

Personally, when automating contract checks with AI, I always verify the origin of the model behind the tool. For products from major vendors, such as Claude or ChatGPT, the data handling policies and terms of service are clearly documented, but this is often not the case with newer, cheaper tools.

Three Actions Business Leaders Should Take Now

Don’t dismiss this news as “a story from a faraway country.” Here are concrete actions to integrate these lessons into your own AI adoption process.

1. Audit the “Provenance” of Your AI Tools

For AI tools you currently use or are considering, check the following:

- What is the base model? (OpenAI, Google, Meta, Chinese-made, etc.)

- Are “model distillation” prohibitions clearly stated in the terms of service?

- Where is the data stored and where are the processing servers located?

- Does it have third-party audits or security certifications (e.g., SOC 2, ISO/IEC 27001)?

Do not judge free or extremely cheap AI tools on cost alone. Scrutinize them from the perspectives above. Saving a few thousand yen per month could expose you to losses in the hundreds of millions of yen.
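One way to make this audit repeatable is to record the answers per tool and list whatever is still unknown. The following is a minimal sketch in Python; the `ToolAudit` class, its field names, and the example tool are all hypothetical and simply mirror the four questions above.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ToolAudit:
    """Answers to the four provenance questions for one AI tool (hypothetical schema)."""
    name: str
    base_model: Optional[str]            # None if the vendor does not disclose it
    tos_addresses_distillation: bool     # True if the terms of service cover distillation
    data_location: Optional[str]         # Storage / processing region, None if unknown
    certifications: List[str] = field(default_factory=list)  # e.g. ["SOC 2"]


def open_issues(audit: ToolAudit) -> List[str]:
    """Return the checklist items that are still unanswered or unsatisfied."""
    issues = []
    if not audit.base_model:
        issues.append("base model not disclosed")
    if not audit.tos_addresses_distillation:
        issues.append("terms of service do not address model distillation")
    if not audit.data_location:
        issues.append("data storage / processing location unknown")
    if not audit.certifications:
        issues.append("no third-party audit or security certification")
    return issues


# Example: a free tool whose vendor discloses nothing (made-up entry).
cheap_tool = ToolAudit(
    name="ExampleTranslate",
    base_model=None,
    tos_addresses_distillation=False,
    data_location=None,
)

for issue in open_issues(cheap_tool):
    print(f"{cheap_tool.name}: {issue}")
```

A tool with an empty issue list is not automatically safe, but a tool with several open issues is a clear candidate for closer review before adoption.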

2. Make “Technological Transparency” a Criterion for In-House Development

The shift from SaaS to in-house development is accelerating, and “technological transparency” should be added to your decision-making criteria. SaaS that relies on external AI models is directly affected by the vendor’s technology choices and regulatory changes. In contrast, building your own system on an openly available model (like Llama 3) gives you far more control: you know exactly which model you are running, where it runs, and what data it sees. Note that even for open-weight models, the original training data is not necessarily disclosed in full.

In-house development costs roughly ¥100,000–¥300,000 per month (approx. $700–$2,100) at the outset, covering cloud and API fees and engineer hours. In the long run, however, it is a worthwhile investment for avoiding vendor lock-in and hedging risks. Among my clients, a growing number of companies have stopped using Chinese-made AI tools and chosen to host Llama 3 on their own servers.

3. Add “AI Security Clauses” to Your Procurement Policy

Add security clauses related to AI tools to your company’s IT procurement policy. Here are some specific examples:

- The vendor must disclose the name and version of the AI model used.

- The vendor must guarantee that your company’s data is not included in the model’s training data.

- The vendor must not use your company’s inputs or outputs for model distillation or any other secondary purpose.

- The tool must comply with AI regulations in the U.S., EU, and Japan.

Work with your legal department to incorporate these clauses into contracts. When dealing with overseas vendors in particular, be sure to also check the governing law and dispute resolution clauses.

Beyond the Cost-Security Trade-off

The model distillation issue highlights the trade-off between “cost” and “security” in AI adoption. Cheap models are attractive, but business leaders need the insight to identify the hidden risks.

In my own company, AI adoption cut 1,550 hours of work per year, but I changed tools three times along the way. The first tool, which I chose on cost, later turned out to carry data leakage risks, and migrating away from it was expensive. From this experience, I can say that AI tool selection should be based on “Total Cost of Ownership (TCO),” which must include “risk response costs.”

To give you a sense of costs, a safe AI tool typically costs ¥2,000–¥5,000 (approx. $14–$35) per user per month. In contrast, a high-risk tool might be free or cost ¥1,000 (approx. $7) per month, but the legal costs in the event of a problem can run into the millions of yen. The question for business leaders is how to evaluate this difference.
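To make this comparison concrete, here is a minimal Python sketch that treats TCO as the annual subscription fee plus an expected risk response cost. The per-user prices come from the ranges above; the number of users, the incident probabilities, and the ¥5,000,000 incident cost are assumptions chosen purely for illustration, not measured figures.

```python
def annual_tco(price_per_user_month: int, users: int,
               incident_probability: float, incident_cost: int) -> float:
    """Annual subscription cost plus expected risk response cost, in yen."""
    subscription = price_per_user_month * users * 12
    expected_risk_cost = incident_probability * incident_cost
    return subscription + expected_risk_cost


USERS = 20                   # assumption: a 20-person team
INCIDENT_COST = 5_000_000    # assumption: legal and migration costs of one incident

# "Safe" tool: mid-range price from the article, low assumed incident probability.
safe = annual_tco(3_500, USERS, incident_probability=0.01, incident_cost=INCIDENT_COST)

# "High-risk" tool: cheap price from the article, higher assumed incident probability.
cheap = annual_tco(1_000, USERS, incident_probability=0.20, incident_cost=INCIDENT_COST)

print(f"Safe tool TCO:      ¥{safe:,.0f} per year")
print(f"High-risk tool TCO: ¥{cheap:,.0f} per year")
```

Under these assumed numbers the cheaper tool ends up costing more per year (about ¥1,240,000 versus ¥890,000), but the result depends entirely on the probabilities and incident cost you plug in, so it is worth running with your own estimates.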

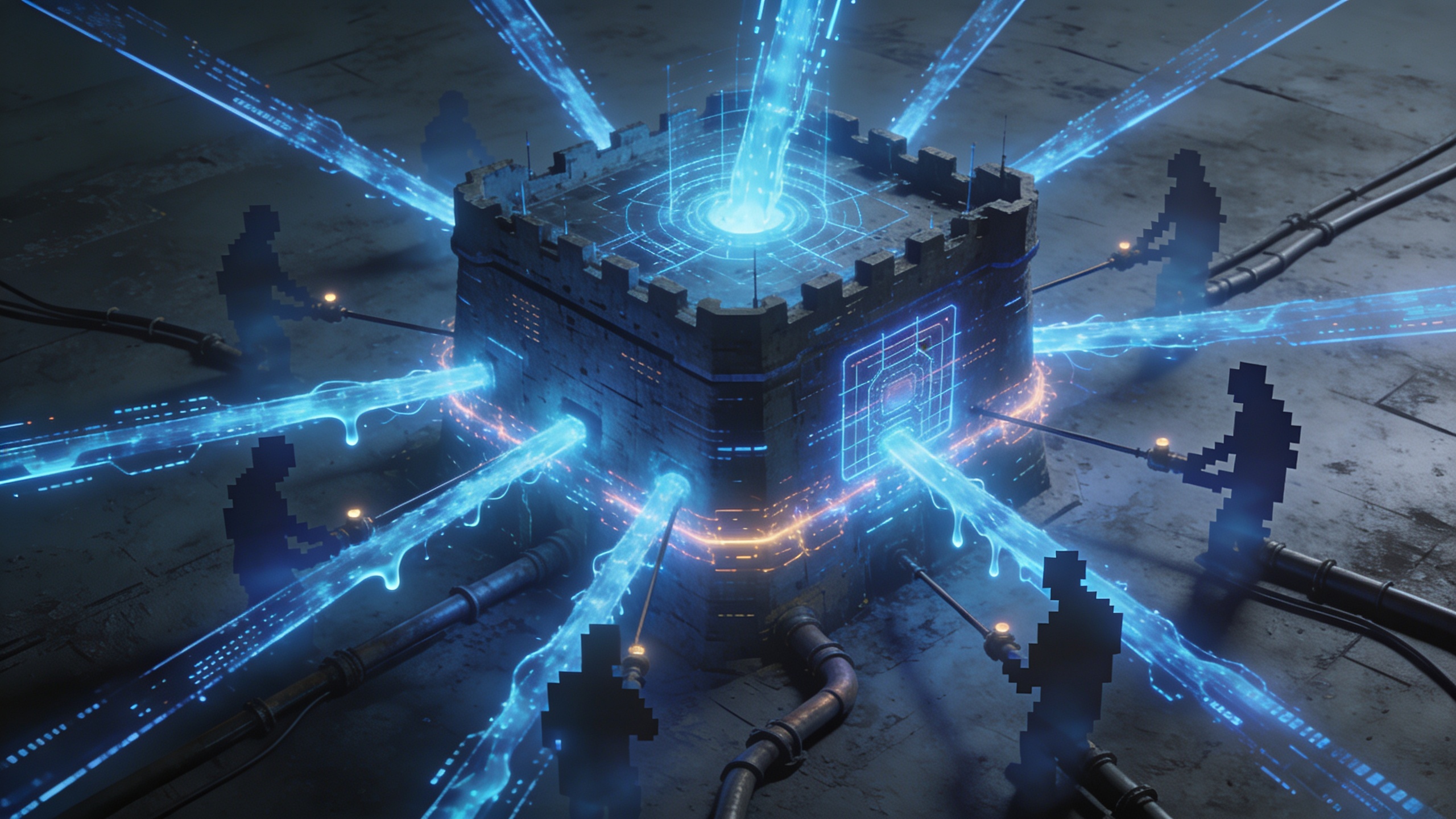

Summary: “Visibility” into Technology Procurement Determines Competitiveness

The U.S. State Department’s warning shows that “visibility” is essential in technology procurement in the AI era. Running a business that depends on black-box AI models leaves you exposed to risks that could materialize at any time.

When advancing AI adoption in your company, always keep these three points in mind:

- Understand the “inner workings” of the AI you are using.

- Evaluate the balance of risk and cost using TCO.

- Have a procurement strategy that includes in-house development as an option.

The more technology is democratized, the more a company’s competitiveness depends on its management’s ability to correctly evaluate the “provenance” of that technology. Why not take this news as an opportunity to review your own company’s AI procurement process?
